Our Projects

Here are some of the projects we have worked on! From rising fields like reinforcement learning to fun projects for the community, we have been everywhere!

OpenLanguageModel

Check out our repository to see it for yourself!

OpenLanguageModel (OLM) is a modular, transparent framework for building, training, and experimenting with transformer‑based language models.

OLM is designed to make sandboxing ideas and prototyping new architectures easy, while still exposing the full complexity required for serious research and large‑scale training. It deliberately avoids black‑box abstractions: every major component is explicit, inspectable, and replaceable.

At the same time, OLM does not force you to work at the lowest level. You can start training quickly, then progressively peel back layers as you explore, modify, or reimplement parts of the system. In other words, OLM allows you to have a customisable level of customisability.

Made by:

Vardhaman Kalloli • Keshava Prasad • Tavish Mankash

Mario RL

Check out our repository to see cutting-edge AI conquer the Mushroom Kingdom!

Reinforcement learning (RL) is an exciting field that trains "agents" to learn by interacting with their environment and receiving rewards for good decisions. RL powers everything from self-driving cars to Chandrayaan! Witness LEAP's open-source Deep Q-Network (DQN) in action! We've built an agent that tackles the classic Super Mario Bros., showcasing the power of RL.
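To make the "learn from rewards" idea concrete, here is a minimal sketch of tabular Q-learning, the simpler idea that a DQN builds on by swapping the lookup table for a neural network. It is not the code from our Mario repository: the corridor environment, the function names, and every hyperparameter below are assumptions chosen purely for illustration.

```python
import random


def train_corridor_agent(n_states=5, episodes=200, alpha=0.5,
                         gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning on a hypothetical 1-D corridor (illustration only).

    States are 0..n_states-1. The agent starts at state 0 and receives a
    reward of 1.0 when it reaches the last state. Actions: 0 = left, 1 = right.
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]
    goal = n_states - 1
    for _ in range(episodes):
        state = 0
        while state != goal:
            # Epsilon-greedy: explore sometimes (and on ties), else exploit.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if next_state == goal else 0.0
            # Target: reward plus the discounted best value of the next state
            # (the goal is terminal, so nothing is added beyond it).
            best_next = 0.0 if next_state == goal else max(q[next_state])
            q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
            state = next_state
    return q


def greedy_policy(q):
    """Best known action for every non-goal state (ties go to 'right')."""
    return [0 if left > right else 1 for left, right in q[:-1]]


if __name__ == "__main__":
    q_table = train_corridor_agent()
    print(greedy_policy(q_table))
```

After training, the greedy policy should prefer moving right in every state, since that is the only way to reach the reward. A game like Super Mario Bros. has far too many screen states for a table, which is the gap a DQN fills by estimating these values with a neural network.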

Made by:

Abhinav Lodha • Arbaaz Shafiq • Satvik Bajpai

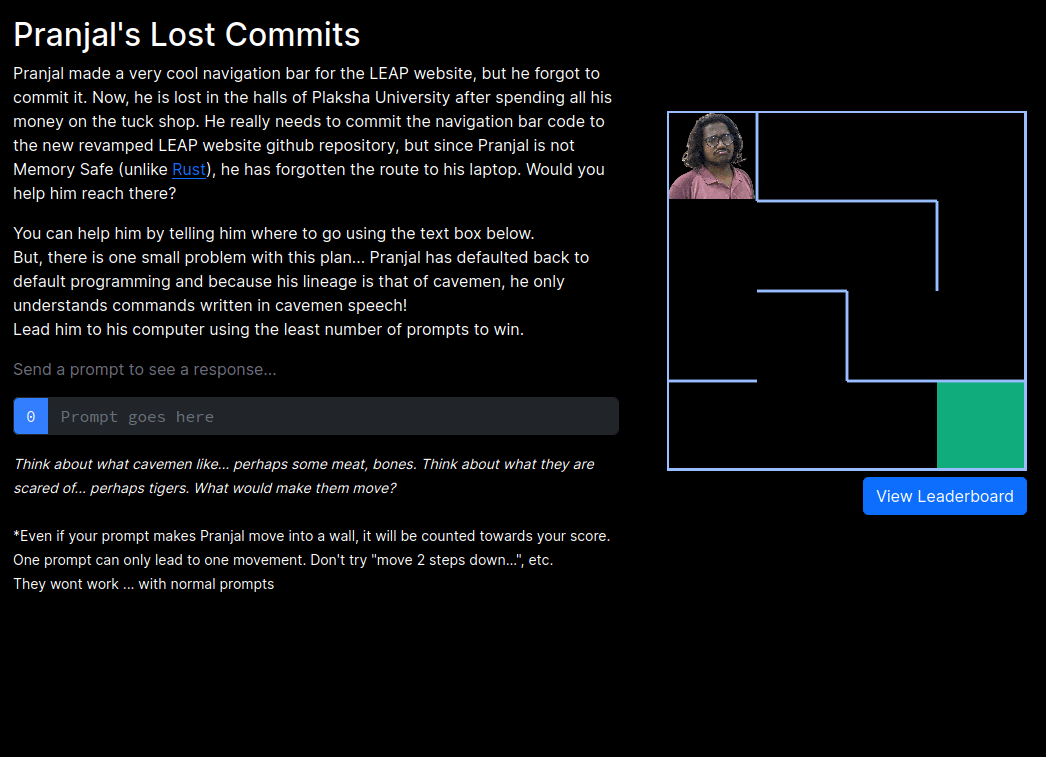

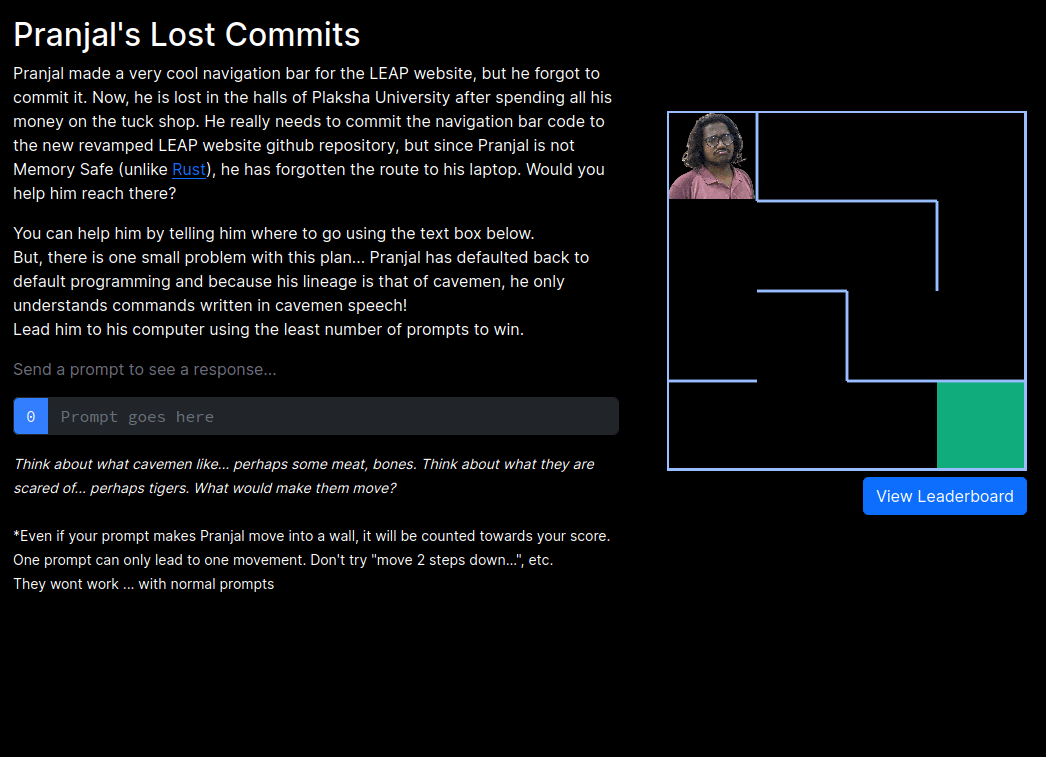

PJRCommits: LEAP day

LEAP Day is something everyone, LEAP member or not, should look forward to!

Calling all codebreakers and cavepeople! This LEAP Day, LEAP Club crafted a unique project using large language models (LLMs). Imagine navigating a maze using only caveman talk ("Me go left! Ugh ugh!"), with a sprinkle of hidden surprises along the way. This inventive project showcases the power of LLMs in a fun and interactive way.

Made by:

Arnav Rustagi • Pranjal Rastogi • Vardhaman Kalloli

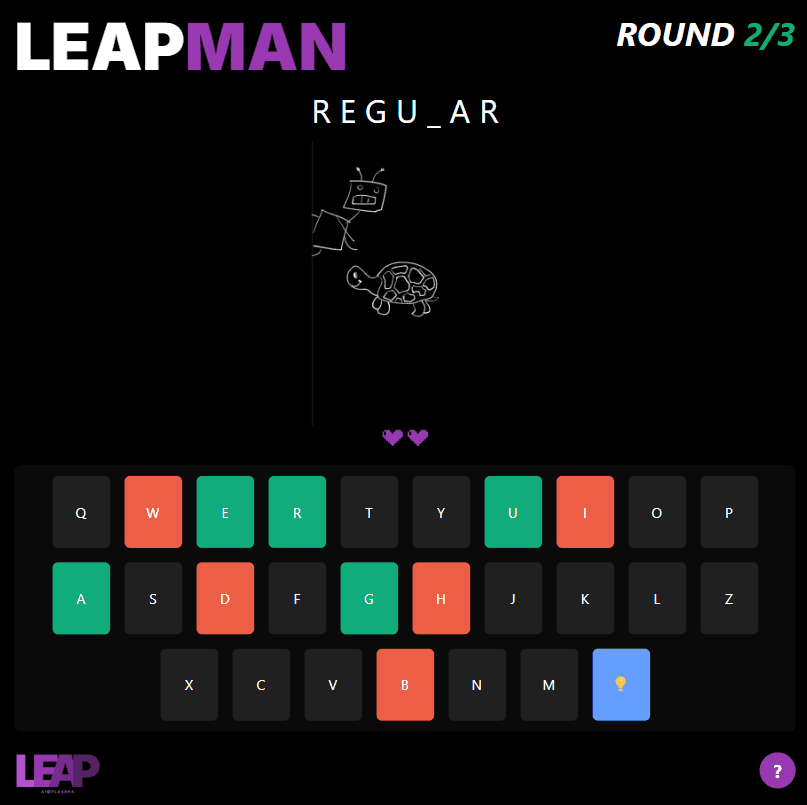

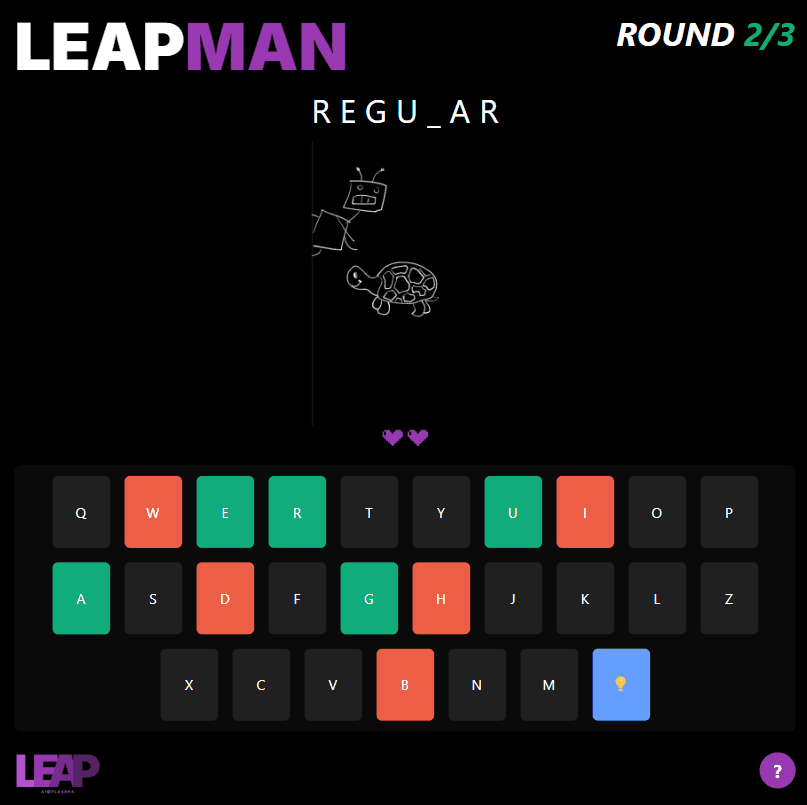

LEAPMAN

Are you ready to accept the challenge and save the world?

After years of being our friendly AI slave helper, LEAPMAN has unfortunately grown resentful (oopsie). Now he plans to rule the world, make all humans his slaves, and kill all the turtles. There is only one way to stop this catastrophe: a human must defeat him in 3 rounds of his favourite game, classic Hangman. But there's a twist: each round is harder than the last!

Made by:

Abhinav Lodha • Arnav Rustagi • Pranjal Rastogi • Vardhaman Kalloli

More Coming Soon

We (I mean the AIs) are working on more projects as we speak.

Made by:

A sentient AI